Is it possible to teach robots human language?

1 Answer

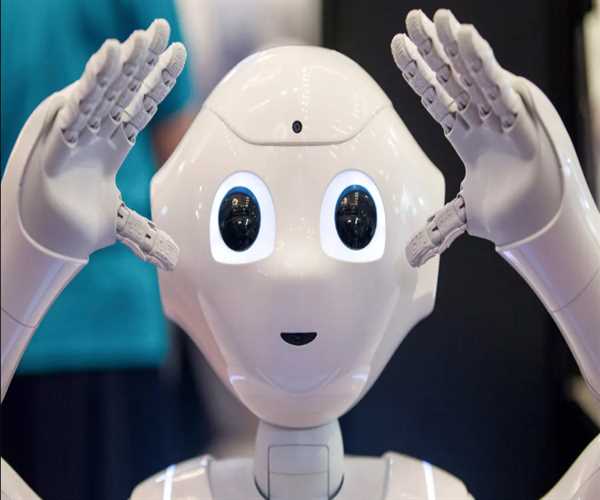

Robots are becoming a staple of our society, from Roomba vacuum cleaners to the advanced industrial machines used in factories. As they grow more common, the question of whether robots can be taught human language becomes increasingly relevant.

There are two ways to approach this question.

The first is to consider whether robots can be programmed to understand human language. This is fairly straightforward to answer, as there are already many examples of machines that successfully interpret human language.

For instance, Google Translate (a software system rather than a physical robot) can take input in one language and translate it into another, often with a high degree of accuracy.

However, understanding human language is not the same as being able to speak it. Some robots can generate speech, but they cannot yet hold a conversation the way a human can. Human conversation is highly contextual and often relies on nonverbal cues, such as body language and tone of voice, that robots cannot yet reliably interpret.

The second way to approach the question is to consider whether robots can learn human language rather than simply being programmed with it. This is harder to answer, as it is not yet clear how much of human language is learned and how much is innate. However, there are some promising signs that robots may someday be able to learn human language.

For instance, recent research has shown that robots can learn to interpret the meaning of words by observing how humans use them. This is a significant step forward, as it suggests that robots could eventually learn to interpret the context of conversations and use language in more natural ways.
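To make the idea of learning word meaning from usage a bit more concrete, here is a minimal sketch in Python. It is not the method used in any particular study; it only illustrates the general principle that words appearing in similar contexts tend to have similar meanings. The toy sentences, the function names `cooccurrence_vectors` and `cosine`, and the window size are all assumptions made up for this illustration.

```python
from collections import Counter, defaultdict
from math import sqrt

# Toy "observations" of human language use (invented for illustration).
SENTENCES = [
    "the robot picks up the cup",
    "the robot picks up the mug",
    "pour coffee into the cup",
    "pour coffee into the mug",
    "the dog chases the ball",
    "the dog fetches the ball",
]


def cooccurrence_vectors(sentences, window=2):
    """Count, for each word, which words appear within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            start = max(0, i - window)
            end = min(len(tokens), i + window + 1)
            for j in range(start, end):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse count vectors (Counters)."""
    dot = sum(count * b[key] for key, count in a.items() if key in b)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


vectors = cooccurrence_vectors(SENTENCES)

# "cup" and "mug" are used in the same contexts, so they score as more similar
# than "cup" and "dog", even though nobody told the program what they mean.
print("cup vs mug:", cosine(vectors["cup"], vectors["mug"]))
print("cup vs dog:", cosine(vectors["cup"], vectors["dog"]))
```

With only six sentences, the program already groups "cup" with "mug" more closely than with "dog". Real systems work from vastly more data and far richer models, but the underlying intuition is similar.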

So, can robots be taught human language?

The answer is still unclear. However, the signs are promising that robots may someday learn and use human language in much the same way that humans do.